| December 2026: |

Gina Yuan*, Thea Rossman*, Michael Welzl, and KW,

In-Network Retransmissions for Encrypted Transport

Protocols, CoNEXT 2026

The earlier Sidekick paper showed how to do

network-originated acknowledgments for an encrypted transport

protocol, using some fancy (for us) math. In this paper, Gina and Thea

show how to do network-originated retransmissions for an

encrypted transport protocol, using even fancier math and some

manipulation of the end-to-end selective acknowledgments.

|

| April 2026: |

Yuhan Deng, Akshay Srivatsan, Sebastian Ingino, Francis Chua, Yasmine Mitchell, Matthew Vilaysack, and KW

Fix: externalizing network I/O in serverless computing, EuroSys 2026

Our group is working on a new kind of operating system that treats

"computational truths" as first-class objects and guarantees every

result is reproducible. The goal is to be able to include theorems

about results (e.g. "this program, run in this environment, produces

this output") in the filesystem, be able to combine these theorem

objects into larger claims, and reproduce/share these results across

the network. We'd like it to be impossible for a program, even an AI

program, to claim a computational result that isn't true. It can be a

lot more efficient to run software expressed in this representation,

because making computation reproducible means making its I/O visible

to the underlying infrastructure, which usually permits better

placement and scheduling and avoids blocking. Ultimately we're

trying to commoditize cloud computing and produce an environment we'd

be comfortable with AI playing in.

|

| March 2026: |

Emilee Chapman and I designed an all-freshman-class activity for

Stanford's COLLEGE 102 class (Citizenship in the 21st Century),

modeled on the old "writing rotisserie" at Harvard Law School.

The class of 2029 wrote about 310 original policy memos in groups of

four or five students, negotiated in class to coalesce those into 109

"refined" memos (across 77 sections of the class), then anonymously

peer-reviewed eight inbound memos from other sections, ranking the #1

and #2 memos in each batch, all seeking to build a classwide consensus

around: "How should the Stanford community promote learning, reduce

cheating, and conduct accurate assessments of student learning in the

age of artificial intelligence?" The authors of the

winning memo got to discuss their proposal over lunch with Stanford's provost, vice provost, and others and they were very frank with them!

|

| July 2025: |

Gina Yuan, Thea Rossman, and KW,

Internet Connection Splitting: What’s Old is New Again, USENIX ATC 2025

In the 1990s, many networks deployed performance-enhancing proxies

(PEPs) that transparently split TCP connections to aid performance,

especially over lossy, long-delay paths. Two recent developments have

cast doubt on their relevance: the BBR congestion-control algorithm,

which de-emphasizes loss as a congestion signal, and the QUIC

transport protocol, which prevents transparent connection-splitting

yet empirically matches or exceeds TCP’s performance in wide

deployment, using the same congestion control. In light of this, are

PEPs obsolete? This paper presents a range of emulation measurements

indicating: “probably not.” While BBR’s original 2016 version didn’t

benefit markedly from connection-splitting, more recent versions of

BBR do and, in some cases, even more so than earlier “loss-based”

congestion-control algorithms.

|

| November 2024: |

Reducing WebAssembly's RAM overheads to (close to) the bare minimum. Joint work with Dominik Tacke and our wasm2c/WABT co-contributors.

|

| April 2024: |

Gina Yuan, Matthew Sotoudeh, David K. Zhang, Michael Welzl, David Mazières, and KW,

Sidekick: In-Network Assistance for Secure End-to-End Transport Protocols, NSDI 2024

(won Community Award and Outstanding Paper Award).

The challenge: old transport protocols (like TCP) benefit from

performance-enhancing proxies (PEPs) that transparently

split TCP connections in the network. But these proxies have

prevented TCP from adding new features. To prevent this, new

transport protocols (like QUIC) are fully encrypted—no proxy can

transparently meddle with them. This guarantees evolvability at the

cost of performance.

Gina's paper shows a way to get the best of both worlds:

- It may be possible to create a “universal PEP” that

works with arbitrary, pre-existing transport protocols (even fully

encrypted ones). The PEP assists endpoints over an adjacent

“sidekick” connection.

- The core technical challenge: how can the PEP efficiently refer to ranges of packets of a fully encrypted connection? Some

linear algebra makes this possible, compromising between size on the

wire, computation for the proxy and endpoint, and ability to recover

from loss.

- Once endpoints are getting information from the PEP, there are some fun questions about how that should influence

their behavior. We created a "path-aware" congestion-control scheme

whose response to packet loss considers where loss occurred

along the path. When assisted by a universal PEP, it's possible for an

endpoint to emulate the performance of "split" TCP pretty closely.
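To give a flavor of how a proxy might refer to packets it cannot read, here is a minimal sketch of one construction in this spirit: both sides keep power sums of opaque packet identifiers modulo a prime, and the sender recovers the missing identifiers from the difference. The prime `P`, the function names, and the assumption that identifiers are distinct integers are all illustrative choices, not details taken from the paper.

```python
# Illustrative only: identifiers are assumed to be distinct integers in [1, P).
P = 2_147_483_647  # the prime 2**31 - 1; a hypothetical choice of field size


def power_sums(ids, count):
    """Return [sum(x**1), ..., sum(x**count)] over ids, all modulo P."""
    sums = [0] * count
    for x in ids:
        term = 1
        for k in range(count):
            term = term * x % P
            sums[k] = (sums[k] + term) % P
    return sums


def find_missing(sent_ids, received_sums, received_count):
    """Return the sent_ids that the receiver's summary does not cover."""
    m = len(sent_ids) - received_count  # number of missing packets
    if m == 0:
        return []
    if m < 0 or m > len(received_sums):
        raise ValueError("missing-packet count is outside what the summary can decode")
    sent_sums = power_sums(sent_ids, m)
    # Power sums of the missing identifiers alone (zip stops after m terms).
    diff = [(s - r) % P for s, r in zip(sent_sums, received_sums)]
    # Newton's identities turn power sums into elementary symmetric polynomials.
    e = [1]
    for k in range(1, m + 1):
        acc = 0
        for i in range(1, k + 1):
            sign = 1 if i % 2 == 1 else -1
            acc += sign * e[k - i] * diff[i - 1]
        e.append(acc % P * pow(k, -1, P) % P)
    # Coefficients of prod(x - missing_id), highest degree first.
    coeffs = [((-1) ** k * e[k]) % P for k in range(m + 1)]

    def evaluate(x):
        value = 0
        for c in coeffs:  # Horner's rule
            value = (value * x + c) % P
        return value

    # A sent identifier is missing exactly when it is a root of the polynomial.
    return [x for x in sent_ids if evaluate(x) == 0]


sent = [101, 202, 303, 404, 505]
received = [101, 303, 505]
summary = power_sums(received, 3)  # fixed-size summary, whatever the packet count
print(find_missing(sent, summary, len(received)))
```

The summary's size depends only on how many losses it must be able to decode, which is where the tradeoff between bytes on the wire, computation, and loss recovery shows up.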

|

| June 2023: |

"Four Steps to the Apocalypse": A talk at the 60th anniversary of MIT's Project MAC and the 20th anniversary of the creation of MIT CSAIL, focusing on alternative "existential" threats from AI (sociological, disciplinary, and "consolidationalist").

|

| November 2022: |

Yuhan Deng, Angela Montemayor, Amit Levy, and KW, Computation-Centric

Networking, HotNets 2022.

Yuhan is leading a project to create a new kind of OS and an

environment for computation that we call "computation-centric

networking." The core principles are a separation between I/O and

compute (with delineated nondeterminism), a common notion of

correctness, and fine-grained visibility into application dataflow.

This suggests an end-to-end argument for serverless computing,

shifting the service model from “renting CPUs by the second” to

“providing the unambiguously correct result of a computation.”

Accountability to these higher-level abstractions could permit agility

and innovation on other axes. (The full conference version of this

work was published in 2026.)

|

| November 2022: |

Gina Yuan, David K. Zhang, Matthew Sotoudeh, Michael Welzl, and KW,

Sidecar: In-Network Performance Enhancements in the Age of Paranoid Transport Protocols, HotNets 2022

Is it possible to get the anti-ossification benefits of a fully

encrypted transport protocol (like QUIC), and without changing

anything about the protocol's wire format, also get the

benefits of in-network acceleration? Probably! (The full conference

version of this work was published in 2024.)

|

| August 2022: |

Sadjad Fouladi, Brennan Shacklett, Fait Poms, Arjun Arora, Alex Ozdemir,

Deepti Raghavan, Pat Hanrahan, Kayvon Fatahalian, and KW,

R2E2: Low-latency Path Tracing of

Terabyte-scale Scenes Using Thousands of Cloud CPUs,

SIGGRAPH 2022.

Sadjad, Brennan, and co-authors created a gigantic ray-tracing engine

using swarms of thousands of cloud CPUs fired up in a few seconds, in

order to render truly gigantic film-scale scenes (with a terabyte of

geometry and texture data) interactively on demand.

|

| January 2022: |

Luke Hsiao, Brooke Krajancich, Philip Levis, Gordon Wetzstein, and KW,

Towards Retina-Quality VR Video

Streaming: 15 ms Could Save You 80% of Your Bandwidth, Computer Communication Review 52, 1 (January 2022). (Stanford press release here.)

Luke and Brooke built a "gaze-contingent" foveated UHD video

compression/streaming system with a total latency (from eye motion to

responsive photons) of about 14 ms. This includes the latencies from

eye-tracking (~1.5 ms), video encoding (~3 ms), video decoding (~2

ms), HDMI video scanout (~3.5 ms), and physical transition of the LCD

(~4 ms), but doesn't include network latency. Luke then ran a user

study (under strict COVID protocols) to evaluate the available

benefit, in terms of compressed video bitrate, if the server only

sends high-quality video where the user's gaze is pointing and

low-quality video elsewhere.
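As a quick check on the latency budget above, the per-stage figures can be added up directly. The dictionary keys are just labels for the stages named in the text.

```python
# Per-stage latencies in milliseconds, as listed above (network latency excluded).
latency_ms = {
    "eye tracking": 1.5,
    "video encoding": 3.0,
    "video decoding": 2.0,
    "HDMI scanout": 3.5,
    "LCD transition": 4.0,
}

total_ms = sum(latency_ms.values())
print(f"eye-motion-to-photon latency: about {total_ms:g} ms")
```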

The bottom line is that if you can get the latencies down to

this level, you can achieve a roughly 5× reduction in bitrate

over the current state-of-the-art before the foveated compression

becomes noticeable. But the user-study results suggest there is an

unfortunate "cliff"—at the eye-motion-to-photon latency of

current VR HMDs (~80 ms), or even a hypothetical improved HMD with 45

ms, the available bitrate savings were much more limited before users

become annoyed by the sharp region noticeably snapping into place. Only

at the lowest achievable latency (14 ms) did we observe a substantial

savings. The shape of the curve between 14 ms and 45 ms, and what the

highest tolerable latency is that still permits a substantial bitrate

savings from foveated compression, will have to wait for future

studies.

|

| December 2021: |

Zhixiong Niu, Qiang Su, Peng Cheng, Yongqiang Xiong, Dongsu Han, KW, Chun Jason Xue, and Hong Xu. 2021. NetKernel: Making Network Stack Part of the Virtualized Infrastructure. IEEE/ACM Transactions on Networking.

Earlier: Zhixiong Niu, Hong Xu, Peng Cheng, Qiang Su, Yongqiang Xiong, Tao Wang, Dongsu Han, and KW,

NetKernel: Making Network Stack Part of the Virtualized Infrastructure,

USENIX ATC, July 2020.

Even earlier: Zhixiong Niu, Hong Xu, Dongsu Han, Peng Cheng, Yongqiang Xiong, Guo

Chen, and KW, Network Stack as a Service in the

Cloud, ACM HotNets, Palo Alto, Calif., November 2017.

What if VMs interacted with the outside world not via a virtual NIC,

but through stream sockets, with the TCP implementation provided by

the host?

|

| June 2021: |

Colleen Josephson, Manikanta Kotaru, KW, Sachin Katti and Ranveer Chandra, Low-cost In-ground Soil Moisture Sensing with Radar Backscatter Tags, ACM SIGCAS Conference on Computing and Sustainable Societies (COMPASS ‘21).

Colleen created a new way of measuring soil moisture (important for

agriculture, especially re: irrigation) efficiently, by putting the

expensive part in a handheld reader that measures the time-of-flight

to “dumb” backscatter tags embedded in the soil. The work

involved a lot of building electronics, digging up different kinds of

soils across the Bay Area and Central Valley to test with, and

persuading Stanford's environmental scientists that her technique

wasn't crazy. The predicted-vs.-actual link budget turned out really

close—check out Figure 6!

|

| March 2021: |

Working with my student Sadjad Fouladi, and our collaborators Michael

Rau (Theater and Performance Studies), Tsachy Weissman (EE), and

Dustin Schroeder (Geophysics), we operationalized Sadjad's earlier

“Salsify” research into "Stagecast," an effort to produce

the best possible videoconferencing system for live theater and music

during the pandemic.

In the fall of 2020, we co-taught a class for undergraduates and

master's students:

CS 349T / EE 192T: Video and

Audio Technology for Live Theater in the Age of COVID, where our

students built the system out into a usable tool. Then in the

winter of 2021,

we rehearsed

and eventually held

three live performances

in March 2021, including four new plays performed by a cast of

five student actors, plus three musical numbers performed by members

of the Wet Ink Ensemble from New York City. The performances were

enabled by a backstage crew of seven student technicians who used

our software to adjust camera focus/framing/brightness, audio levels,

and shot selection in real time, advised by the instructional staff from

the TAPS department.

One innovation in the Stagecast system is a new media transport

protocol that allows multiple reconstructions of the same incoming

stream of packets. Each musician sends out their audio (ideally) once

over a reliable transport protocol. The priority of original

vs. retransmitted packets is sensitive to the low-latency needs of the

stream. At the receiver side, the stream is reconstructed multiple

times with different latency thresholds: once to produce a low-latency

(but low-tolerance-for-jitter) feed, and again 100 ms later to produce

a higher-latency, higher-quality version of the same incoming

packets. The first feed goes to the other musicians; the second feed

is used by the backstage mixers and eventually for the audience.
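The idea of reconstructing one packet stream more than once can be sketched roughly as follows. This is a simplified model, not the Stagecast code: the `Packet` fields, the 30 ms low-latency threshold, and the sample timings are all made up for illustration; only the 100 ms gap between the two reconstructions comes from the description above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Packet:
    seq: int
    capture_ms: float  # when the audio was captured at the sender
    arrival_ms: float  # when the packet (original or retransmission) arrived


def reconstruct(packets, playout_delay_ms):
    """Return the sequence numbers usable if audio plays playout_delay_ms after capture."""
    return sorted(
        p.seq for p in packets if p.arrival_ms <= p.capture_ms + playout_delay_ms
    )


# Hypothetical arrivals: packet 1 needed a retransmission, packet 3 arrived very late.
packets = [
    Packet(seq=0, capture_ms=0, arrival_ms=20),
    Packet(seq=1, capture_ms=10, arrival_ms=95),
    Packet(seq=2, capture_ms=20, arrival_ms=45),
    Packet(seq=3, capture_ms=30, arrival_ms=200),
]

low_latency_feed = reconstruct(packets, playout_delay_ms=30)         # for the other musicians
high_quality_feed = reconstruct(packets, playout_delay_ms=30 + 100)  # for mixers and audience
print(low_latency_feed, high_quality_feed)
```

The later reconstruction gets to use retransmissions that arrived too late for the first one, so the same packets yield a more complete feed.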

|

| November 2020: |

Emily Marx, Francis Y. Yan, and KW, Implementing BOLA-BASIC on Puffer: Lessons for the use of SSIM in ABR logic.

As part of her master's work, Emily implemented the BOLA-BASIC ABR

scheme on our Puffer site and evaluated it for 17.7

“stream-years” (about three real-world months) in a

randomized trial with other ABR schemes. She found some curious

behavior emerging from BOLA's objective function and worked with the

BOLA authors to tune the algorithm better. Emily's results suggest

that the tuning constants inside these ABR schemes may often drive their

real-world performance, more than the smarts or sophistication of the

algorithms themselves.

|

| August 2020: |

Tong Li, Kai Zheng, Ke Xu, Rahul Arvind Jadhav, Tao Xiong, KW, and Kun Tan,

TACK: Improving Wireless Transport Performance by Taming Acknowledgments,

SIGCOMM 2020.

Tong Li and I met and had dinner together when I visited Huawei in 2018, and

we talked about Tong's efforts with his Huawei and Tsinghua colleagues

to design a new acknowledgment scheme for TCP. Tong ended up

developing TACK in part by iterating its design on

our Pantheon of Congestion Control, a testbed

that included nodes with cellular connectivity around the world and,

until 2020, ran automated bake-offs every week and published the

resulting packet traces. Tong deployed 16 different versions of the

TACK scheme on the Pantheon as the system collected many gigabytes of

packet traces throughout 2018 and 2019. The TACK scheme is now

deployed for real on Huawei's smart TVs and Mate 20 smartphones.

|

| February 2020: |

Francis Y. Yan, Hudson Ayers, Chenzhi Zhu, Sadjad Fouladi, James Hong, Keyi Zhang, Philip Levis, and KW,

Learning in situ: a randomized experiment in video streaming, Usenix NSDI 2020,

Santa Clara, Calif., 2020 (won Community Award and Applied Networking Research Prize).

Francis and our co-authors set up a website that streamed 38+ years of

video to 63,500+ users over the course of a year; we found that

variability, and therefore statistical uncertainty, is bigger than had

been documented (calling into question the reliability of some past

research in this area), and that "dumb" ABR schemes may be able to

outperform some more-sophisticated schemes. We describe a way of

learning in place (in situ) that appears to alleviate, at least

partly, some of the pitfalls that have afflicted other ML

approaches. The website is still live, and we welcome contributions

from researchers who would like to evaluate or develop new algorithms

for ABR selection, throughput prediction, or congestion control. We

also welcome contributions from viewers who would like to contribute

to the experiments by watching

TV.

|

| October 2019: |

John Emmons, Sadjad Fouladi, Ganesh Ananthanarayanan, Shivaram Venkataraman, Silvio Savarese, and KW,

Cracking open the DNN black-box: Video Analytics with DNNs across the Camera-Cloud Boundary,

Workshop on Hot Topics in Video Analytics and Intelligent Edges (HotEdgeVideo 2019),

Los Cabos, Mexico.

With our collaborators at Microsoft, we explored outsourcing

computer-vision computations given three constraints: limited compute

power (or actual power!) on a device, limited communications capacity

off the device, and limited tolerance for end-to-end loss (e.g.,

classification mistakes). To me, one of the biggest contributions our

paper made may have been in the axes: the idea that

(given equal end-to-end accuracy, or at least an equal

tolerance for loss) there is a tradeoff between the need for local

compute (on the x-axis, above) and communication (y-axis), and that

schemes should be evaluated in this way and viewed as part of a

tradeoff space.

|

| July 2019: |

Sadjad Fouladi, Francisco Romero, Dan Iter, Qian Li, Shuvo Chatterjee, Christos Kozyrakis, Matei Zaharia, and KW,

From Laptop to Lambda: Outsourcing Everyday Jobs to Thousands of Transient Functional Containers,

USENIX ATC, Renton, Wash., 2019.

A framework to "functionalize" everyday tasks (e.g., software compilation,

unit tests, video encoding, object recognition, ...) and, with their

data-dependencies well-described, offload them efficiently to quick

bursts of 5,000+ lambda functions executing in parallel. Imagine

expressing workflows in, or auto-converting existing workflows into, a sort of Parallel-Haskell-like

representation. This paper tries to bust the myths that S3 is slow for

small files (it depends on the client implementation), lambdas are

slow to start en masse (it depends on the client

implementation) and that AWS Lambda forbids direct network communication

between workers (NAT-traversal

techniques can connect them). More practical info is in

the followup

paper in Usenix ;login: magazine.

|

| November 2018: |

Kalev Alpernas, Cormac Flanagan, Sadjad Fouladi,

Leonid Ryzhyk, Mooly Sagiv, Thomas Schmitz, and KW,

Secure serverless computing

using dynamic information flow control, Proc. ACM

Program. Lang. 2, OOPSLA, Article 118 (November 2018).

How do you reason about information flow in a complex application of

interconnected “serverless” functions? One answer is that

if the application expresses its jobs in a functional content-addressed way (as in our

gg system), enforcing

and reasoning about information flow control becomes almost trivial.
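A minimal sketch of what "functional and content-addressed" can mean in practice, assuming a toy in-memory store. The `Store` class, `job_id`, and `run` are hypothetical names for illustration and are not gg's actual interfaces. Each job names its function and the hashes of its inputs up front, so everything it can read is visible before it runs, and identical jobs map to the same result.

```python
import hashlib
import json


def content_hash(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


class Store:
    """Toy content-addressed store: blobs by hash, plus a cache of job results."""

    def __init__(self):
        self.blobs = {}    # content hash -> bytes
        self.results = {}  # job id -> content hash of the job's output

    def put(self, data: bytes) -> str:
        h = content_hash(data)
        self.blobs[h] = data
        return h


def job_id(function_name, input_hashes):
    """A job is identified by its function name and the hashes of its inputs."""
    description = json.dumps({"function": function_name, "inputs": list(input_hashes)})
    return content_hash(description.encode())


def run(store, function_name, function, input_hashes):
    """Run a job at most once; the job sees only the inputs it declared."""
    key = job_id(function_name, input_hashes)
    if key not in store.results:
        inputs = [store.blobs[h] for h in input_hashes]
        store.results[key] = store.put(function(*inputs))
    return store.results[key]


store = Store()
a = store.put(b"hello ")
b = store.put(b"world")
calls = []


def concat(x, y):
    calls.append(1)  # count real executions
    return x + y


first = run(store, "concat", concat, [a, b])
second = run(store, "concat", concat, [a, b])  # served from the result cache
print(store.blobs[first], len(calls))
```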

|

| July 2018: |

Francis Y. Yan, Jestin Ma, Greg Hill, Deepti Raghavan, Riad

S. Wahby, Philip Levis, and

KW, Pantheon: the training

ground for Internet congestion-control research, USENIX ATC ’18, Boston, Mass., July 2018 (won Best Paper award).

The Pantheon is a community evaluation platform for academic

research on congestion control. It includes a curated collection of

working implementations of congestion-control schemes, a testbed of

measurement nodes on wired and cellular networks, a collection of

network emulators (each calibrated to match the performance of a real

network path or to capture some form of pathological network

behavior), and a continuous-testing system that evaluates the Pantheon

protocols over the real Internet between pairs of testbed nodes and

publicly archives the resulting packet traces and analyses. Pantheon

evaluations have assisted congestion-control research that appeared at NSDI 2018

(Copa

and Vivace),

ICML 2019 (Aurora), and SIGCOMM 2020 (TACK).

|

| April 2018: |

Sadjad Fouladi, John Emmons, Emre Orbay, Catherine Wu, Riad

S. Wahby, and

KW, Salsify:

low-latency network video through tighter integration between a video

codec and a transport protocol, in USENIX NSDI ’18,

Renton, Wash., April 2018 (won Applied Networking Research Prize).

Salsify is a new design for real-time Internet video that jointly

controls a video codec and a network transport protocol. Current

systems (Skype, Facetime, WebRTC) run these components independently,

which produces more glitches and stalls when the network is

unpredictable. In testing, Salsify consistently outperformed today’s

real-time video systems in both quality and delay.

|

| November 2017: |

Dmitry Kogan, Henri Stern, Ashley Tolbert, David Mazières, and KW,

The Case For Secure Delegation, ACM HotNets, Palo Alto,

Calif., November 2017.

Dima and Henri developed and released an open-source tool,

called Guardian

Agent, that performs secure ssh-agent forwarding for SSH and Mosh

in a backwards-compatible way.

|

| November 2017: |

Michael Schapira and

KW, Congestion-Control

Throwdown, ACM HotNets, Palo Alto, Calif., November 2017.

Michael (as Hamilton) and I (Burr) were stuck on an airplane

together and found ourselves at loggerheads about Internet congestion

control. We put our disagreement to good use by collaborating on a

throwdown-in-the-form-of-a-paper (and later an actual throwdown, at

HotNets 2017).

|

| June 2017: |

Judson Wilson, Riad S. Wahby, Henry Corrigan-Gibbs, Dan Boneh, Philip Levis, and KW, Trust but Verify: Auditing the Secure Internet of Things, MobiSys 2017.

A way of using TLS that can allow the owners of IoT devices to

learn what their own devices are saying about them

to the cloud, without compromising the integrity of encrypted communications.

|

| March 2017: |

Sadjad Fouladi, Riad S. Wahby, Brennan Shacklett, Karthikeyan Vasuki Balasubramaniam, William Zeng, Rahul Bhalerao, Anirudh Sivaraman, George Porter, and KW, with demo by John Emmons, Encoding, Fast and Slow: Low-Latency Video Processing using Thousands of Tiny Threads, NSDI 2017,

Boston, Mass., March 2017.

We think ExCamera started the movement to (mis-)use cloud-functions

services for massively “burst-parallel” data processing.

The system achieves low-latency video processing by combining a purely

functional implementation of a VP8 codec (to allow parallelization at

granularities smaller than the interval between key frames) with a

framework that starts thousands of tiny jobs on AWS Lambda at once,

each processing a small segment of the video.

|

| March 2017: |

Daniel Reiter Horn, Ken Elkabany, Chris Lesniewski-Laas, and KW,

The Design, Implementation, and Deployment of a System to Transparently

Compress Hundreds of Petabytes of Image Files For a File-Storage Service,

NSDI 2017, Boston, Mass., March 2017 (won Community Award).

We added transparent recompression of JPEGs to the Dropbox back-end

fileservers, compressing more than 200 petabytes of user data by about

23 percent. To achieve this, we created a purely functional

implementation of the JPEG DC-predicted Huffman coder and adapted the

VP8 format, to be able to resume compression and decompression at the

arbitrary boundaries between prespecified filesystem blocks. The

system is about 9x faster, and within 1 percentage point of the

compression efficiency, of the best prior work. It

is available as free

software.

|

| March 2017: |

In case professoring doesn't work out, I have developed a side hustle

as critic of the Unicode Technical Committee's current approach to

standardizing emoji. I was interviewed

for The Nib's 2017 "Who Makes

Emoji" comic and for episodes 1–4

of Mark Bramhill's "Welcome to

Macintosh" podcast (also condensed into an episode of "99%

Invisible"). I also spoke at length in a 2019 Dutch documentary

and was in "The Emoji Story" (also released as "Picture Character").

|

| October 2016: |

In 2007, an academic cardiologist downloaded 42 medical studies from

the Web site of drug giant GlaxoSmithKline, combined them in a

meta-analysis, and found that Avandia, the world's best-selling diabetes drug,

caused heart attacks. GSK lost about $12 billion in sales and market

value. But a different way to analyze the same data—a “Bayesian”

way—finds that the drug actually reduces heart attacks. Or does it?

We often hear of this conflict, between Bayesian and

“frequentist” statistics. But much of the conflict is

misguided. Viewed formally, on the same axes, the two schools of

statistics turn out to share a tight symmetry. Criticisms of each can

be transformed into a corresponding criticism of the other.

Slides

from talk given at University of Chicago (January 2009),

U.T. Austin (April 2011), MIT CSAIL (October 2013), Boston Children's

Hospital (October 2013), Harvard Medical School (February 2014),

MongoDB Inc. (October 2016). Also written version of the main

section of the talk.

|

| June 2016: |

Amit Levy, James Hong, Laurynas Riliskis, Philip Levis, and KW,

Beetle:

Flexible Communication for Bluetooth Low Energy, MobiSys 2016,

Singapore, June 2016.

Amit figured out and implemented a cool way to interpose on Bluetooth Low Energy

to allow multiplexing device services to multiple applications

at the same time,

with fine-grained access control.

|

| July 2015: |

Ravi Netravali, Anirudh Sivaraman, Somak Das, Ameesh Goyal, KW,

James Mickens, and Hari

Balakrishnan, Mahimahi:

Accurate Record-and-Replay for HTTP, in USENIX ATC 2015, Santa

Clara, Calif., July 2015.

Mahimahi is a series of cascading network emulators, each one

modeling one aspect of a network path (delay, independent per-packet

loss, autocorrelated loss or intermittency, varying bottleneck link

capacity with a specified queue discipline, etc.). Each one opens a

container and affects processes launched within that container, and

the emulators can be nested arbitrarily inside each other to build

up a chain of emulated effects. Mahimahi is included in Debian

and Ubuntu and has been used in a number of network research studies.
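A small sketch of how the nesting composes, written as a helper that builds (but does not run) a command line. It assumes the `mm-delay MILLISECONDS [command...]` and `mm-loss uplink|downlink RATE [command...]` forms; the helper name and the inner `curl` command are just illustrations, and the exact arguments are worth checking against the man pages.

```python
def nest(shells, inner_command):
    """Concatenate emulator invocations so each one launches the next inside itself."""
    argv = []
    for shell in shells:
        argv.extend(shell)
    return argv + list(inner_command)


argv = nest(
    shells=[
        ["mm-delay", "40"],             # outermost: 40 ms of one-way delay
        ["mm-loss", "uplink", "0.01"],  # inside it: 1% independent uplink loss
    ],
    inner_command=["curl", "https://example.com/"],  # hypothetical workload
)
print(" ".join(argv))
```

The resulting list could be handed to `subprocess.run(argv)` on a machine with Mahimahi installed.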

|

| August 2014: |

Anirudh Sivaraman, KW, Pratiksha Thaker, and Hari Balakrishnan, An

Experimental Study of the Learnability of Congestion Control, in

SIGCOMM 2014, Chicago, Ill., August 2014.

Working with my colleagues Anirudh Sivaraman and Pratiksha Thaker,

we used the Remy automatic protocol-design program as a tool to

investigate the “learnability” of the Internet

congestion-control problem: how easy is it to “learn” a

network protocol to achieve desired goals, given a necessarily

imperfect model of the networks where it will ultimately be

deployed?

|

| July 2014: |

Anirudh Sivaraman, KW, Pauline Varley, Somak Das, Joshua Ma, Ameesh

Goyal, João Batalha, and Hari Balakrishnan, Protocol Design Contests,

SIGCOMM Computer Communications Review, July 2014.

We ran an in-class contest to develop a congestion-control

algorithm, asking 40 students in a graduate networking class to

develop protocols that would outperform Sprout. Spurred on by a live

“leaderboard,” the students submitted 3,000 candidate

algorithms that mapped a region of realizable throughput-vs.-delay

tradeoffs. The winners became co-authors on an article describing the

contest and their winning entries.

|

| May 2014: |

My doctoral dissertation: Transport Architectures for an Evolving Internet, advised by Hari Balakrishnan at the Massachusetts Institute of Technology, 2014.

|

| November 2013: |

Anirudh Sivaraman, KW, Suvinay Subramanian, and Hari Balakrishnan, No Silver Bullet: Extending SDN to the Data Plane, in HotNets 2013, College Park, Md., November 2013.

Working with my colleagues Anirudh Sivaraman and Suvinay

Subramanian, we demonstrated bidirectional cyclic preference loops

among three popular algorithms that control queueing and scheduling

behavior within a packet-switched network. Our thesis: no such scheme

can remain dominant as application objectives evolve, so routers and

switches should be programmable in this respect.

|

| August 2013: |

TCP ex Machina: Computer-Generated Congestion Control, in SIGCOMM 2013, Hong Kong, China, August 2013.

Remy is a computer program that creates TCP congestion-control

algorithms from first principles, given uncertain prior knowledge about the

network and an objective to achieve. I used to say that these computer-generated schemes

can outperform their human-generated forebears, even ones that benefit

from running code inside the network—I should have been equally emphatic that it matters a lot how the designer

specifies their assumptions and how closely those assumptions are met by the real network. The real

contribution here, I think, was in the idea that the design of a CC algorithm can be the product of reinforcement learning:

a process where the emphasis is appropriately on the designer's assumptions and goals, and less so on the mechanism. (Joint work with my advisor,

Hari Balakrishnan.)

|

| April 2013: |

Sprout: Stochastic Forecasts

Achieve High Throughput and Low Delay over Cellular Networks, in

USENIX NSDI 2013, Lombard, Ill., April 2013 (won

2014 Applied Networking Research

Prize).

We showed that on today's cellular networks, with some simple

inferential techniques it is possible to achieve 7–9× less delay than

Skype, Facetime, and Google Hangout, while achieving 2–4× the

throughput of these applications at the same time. We packaged the

evaluation into one VM and held a contest for 40 students to try to

find a better algorithm under the same conditions. We were able to match

the performance of the in-network CoDel algorithm, while operating

purely end-to-end. (Joint work with my

colleague Anirudh

Sivaraman and Hari Balakrishnan.)

|

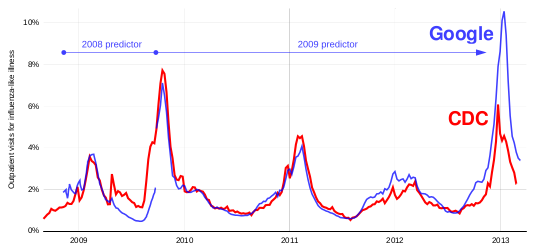

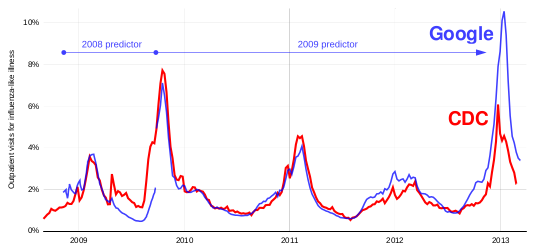

| January 2013: |

On the divergence of Google Flu Trends from the target U.S., French, and Japanese indexes in 2012–2013. Presentation slides (March 14, 2013), delivered at Children's Hospital Informatics Program | Interview 1 | Interview 2 | Radio interview

|

| June 2012: |

Mosh: An Interactive Remote Shell

for Mobile Clients, in USENIX ATC 2012, Boston, Mass.,

June 2012.

We built a novel datagram protocol that synchronizes the state of

abstract objects over a challenged, mobile network. We used this to

implement a replacement for the venerable SSH application that

tolerates intermittent connectivity and roaming, and has a predictive

local user interface. The program is in wide release with hundreds of

thousands of downloads. Joint work with Hari Balakrishnan (research) and

with Keegan McAllister, Anders Kaseorg, Quentin Smith, and Richard

Tibbetts (software).

|

| November 2011: |

End-to-End Transmission Control by Modeling Uncertainty about the Network State,

in HotNets 2011, Cambridge, Mass., November 2011.

We show it is possible to produce reasonable transmission control

from first principles and Bayesian inference, when contending only

with nonresponsive cross traffic. The workshop paper that eventually became

Remy. (Joint work with Hari Balakrishnan.)

|

| October 2009: |

False positive rate for Barnard's test for superiority at nominal 0.05 alpha (red), vs. modified test (blue) informed by prior that true value lies within shaded region. |

Developed exchange

algorithm to calculate the coverage probability and false-positive

rate of “exact” 2x2 confidence intervals and hypothesis

tests. Typically these tests (e.g., Barnard's test for superiority,

the Chan test for non-inferiority) take 5-10 minutes to run at sample

sizes of 1000x1000 in software like StatXAct. The exchange algorithm

calculates the whole tableau (all 1001x1001 outcomes) with the same

effort as the “hardest” single p-value or confidence

interval. Much similar work had been done for one-dimensional tests

and intervals, but the two-dimensional case had previously been

intractable.

This technique allows us to empirically test traditional statistical

rules of thumb, like the appropriateness of the chi-square test

when E[ n p ] > 5, or the notion that exact tests are

unnecessarily conservative. It also allows us to design new tests and

intervals that minimize conservatism and ripple. The above graph

shows the benefit of applying a “prior” to classical

(frequentist) inference. Barnard's test for superiority controls false

positives unconditionally (the red line is always below 0.05), but at

a cost of conservatism in the region of p=0.35. We find that if we are

able to state a region where the parameter is assumed to lie a priori,

we can produce a modified hypothesis test with better performance

inside that region.
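For intuition about what a "false-positive rate" curve like this measures, here is a brute-force sketch (not the exchange algorithm) that sums the probability of every outcome a test would reject when both arms share the same true success rate. The sample sizes and the rejection rule `x1 - x2 >= 7` are arbitrary stand-ins, not Barnard's test.

```python
from math import comb


def binomial_pmf(n, k, p):
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def rejection_probability(n1, n2, p, rejects):
    """Probability that rejects(x1, x2) fires when both arms have success rate p."""
    pmf1 = [binomial_pmf(n1, k, p) for k in range(n1 + 1)]
    pmf2 = [binomial_pmf(n2, k, p) for k in range(n2 + 1)]
    return sum(
        pmf1[x1] * pmf2[x2]
        for x1 in range(n1 + 1)
        for x2 in range(n2 + 1)
        if rejects(x1, x2)
    )


def toy_rule(x1, x2):
    """Hypothetical one-sided rule: reject when arm 1 leads by 7 or more successes."""
    return x1 - x2 >= 7


# Sweep the shared success rate to trace out a false-positive curve.
curve = [(p / 20, rejection_probability(20, 20, p / 20, toy_rule)) for p in range(1, 20)]
for p, rate in curve:
    print(f"p={p:.2f}  false-positive rate={rate:.4f}")
```

This direct sum visits every cell of the outcome table for every value of p, which is the kind of cost that becomes painful at sample sizes of 1000x1000.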

|

| May 2006: |

English

Text Classification by Authorship and Date (class project). The

n-grams used by the U.S. Supreme Court evolve quickly enough that it's

possible to build a pretty good classifier to identify the year of

authorship of an opinion, based only on its four-letter-grams. Other

corpora, like the titles used by high-school students in their winning

entries to the Westinghouse/Intel science contest, can display some

amusing long-term trends. (Joint work with Adam Belay, Mujde Pamuk,

and Tucker Sylvestro.)
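For readers unfamiliar with the feature, a minimal sketch of extracting four-letter-grams from text follows. The normalization (lowercasing and collapsing whitespace) is an assumption for illustration and may differ from what the project did.

```python
from collections import Counter


def char_ngrams(text, n=4):
    """Count overlapping n-character windows after simple normalization."""
    normalized = " ".join(text.lower().split())
    return Counter(normalized[i : i + n] for i in range(len(normalized) - n + 1))


print(char_ngrams("The Court holds"))
```

A classifier would then compare these counts across opinions from different years.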

|

| January 2006: |

MIT OpenCourseWare taped my 8-hour Introduction to Copyright Law course, which I taught for the EECS department in MIT's Independent Activities Period of 2006.

|

| 2005–6: |

We were involved in some of the first

“amateur” high-definition broadcasting, which required

implementing an

ATSC-conforming

scheduler for MPEG-2 transport streams. The project is probably

most notable for producing this

three-hour video of the 2006 MIT Integration Bee. Other

videos of 2005–6 era MIT sports are

also available.

|

| October 2004: |

Created the Library Access to Music

Project, which served as MIT's open-access electronic music library

from 2004 to 2016. (Joint work with Josh Mandel.) Engineering a Campus-Wide Accessible Music Library (MIT master's thesis, 2005). Coverage in NYT | USA Today | Boston Globe | NPR Morning Edition | Fark.

|

| March 2004: |

Broke the

encryption on the Motorola (now Indala) FlexSecur system of RFID

cards in use at MIT. (Joint work with Austin Roach and Josh Mandel.)

|

| December 2003: |

Improving 802.11 Range with Forward Error Correction, CSAIL AI Memo 2005-004, February 2005. Added forward error correction to Wi-Fi, extending range by 70 percent. (Joint work with Reina Riemann.)

|

| May 2002: |

Analysis of

Boston Local Television News Story Selection Bias,

1993–2002. Local news programs prefer to cover entertainment

news relating to prime-time TV shows from their own national

network. Shifts in the affiliation of a TV station can produce a

dramatic change in the news judgment of its local news program,

e.g. when WHDH-TV switched from CBS to NBC in 1995. WHDH’s news

director: "Why would you want to give publicity to a competitor?"

|

| March 2001: |

qrpff DVD

descrambler, written for

an IAP

seminar at MIT on DVD and the Digital Millennium

Copyright Act, joined by DVD-CCA representative David

Barr, Harvard Law School's Jonathan Zittrain, and MIT's

Hal Abelson. (Joint work with Marc Horowitz.) Eventually,

in 2015, the “algorithm” was sold at a

charity art auction to benefit the Cooper Hewitt

Smithsonian Design Museum. Coverage

by CNET

| IDG

| Wired

| The Tech

| New

Yorker | Wall

Street Journal.

|

| January 2000: |

In 2000, I took over the job of MIT Infinite Corridor Astronomer from Ken Olum. We later captured the “MIThenge” phenomenon on video and improved

the accuracy of the predictions. It turns out most models of atmospheric

refraction don't work well within 0.8 degrees of the horizon. Strangely, real astronomers rarely find this to be a big problem... |

| August 1999: |

New frontiers in optical character recognition, recognized by the prestigious Obfuscated Perl Contest. |

| December 1998: |

The first

automated linguistic

steganography.

|