Drew A. Hudson

dorarad [at] cs.stanford.edu [scholar] [github] [linkedin] [twitter]

Hi! My name is Drew. I am a Research Scientist at DeepMind and recently completed my PhD in Computer Science at Stanford University. I was fortunate to work with my advisor, Prof. Christopher D. Manning, and to collaborate with Dr. Larry Zitnick from FAIR, Meta AI, and with Prof. James L. McClelland. I was a member of the Stanford AI Lab and the Stanford NLP group. My research focuses on reasoning, compositionality, and representation learning at the intersection of vision and language.

I explore structural principles and inductive biases for making neural networks more interpretable, robust, and data-efficient, allowing them to generalize effectively and systematically from only a few samples. I believe in the importance of multi-disciplinary work, both within the AI field and across domains, and draw high-level inspiration from the feats of the human mind, including its structural properties as well as its cognitive capabilities.

I believe that compositionality is a key ingredient that, if incorporated successfully into neural models, may help bridge the gap between machine intelligence and natural intelligence. I explore ways to achieve compositionality in terms of both computation and representation.

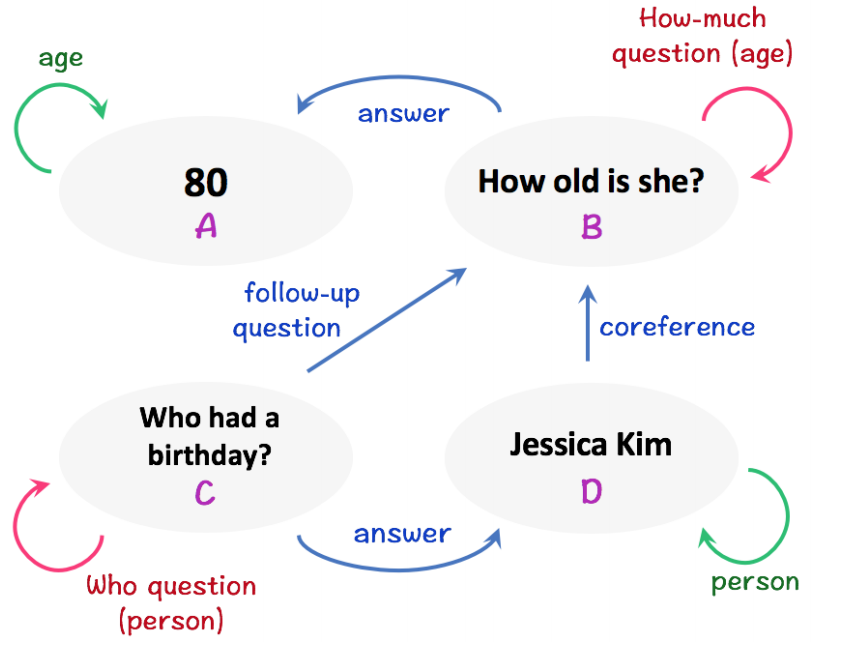

Towards the former, I introduced, together with my advisor, models such as MAC and the Neural State Machine that perform transparent step-by-step reasoning, as well as the GQA dataset for real-world visual question answering.

Towards the latter, I have more recently begun exploring ways to learn compositional scene representations and, along with Larry, presented Generative Adversarial Transformers for fast, data-efficient, and high-resolution image synthesis. I am actively researching this subject further and hope to present new findings in this exciting direction in the near future!