
I am a fifth-year Ph.D. candidate in Computer Science at Cornell University, extremely fortunate to be advised by Prof. Jon Kleinberg. I am currently visiting Stanford Computer Science as an IvyPlus Scholar for the 2025-26 academic year. I am also affiliated with Google Research as a student researcher. I obtained my M.S. in Computer Science from Stanford University, where I worked with Prof. Jure Leskovec. I received my B.S. in Computer Science and Mathematics from the Hong Kong University of Science and Technology, graduating Summa Cum Laude (top 1%). I am serving as a General Chair of the Learning on Graphs (LoG) Conference for 2025 and 2026. I visited the ALFA Group at MIT CSAIL in 2018, interned at Microsoft Research in 2023, and interned at Meta AI in 2024 and 2025. My name is pronounced "Yen-bahng Wong".

Google Scholar / CV / Email / LinkedIn / Twitter


I research how to improve LLMs' reasoning ability on relation-rich structures. My main topics are graph-language models, text-to-SQL generation (including its foundation models and coding agents), and LLMs for recommendation systems.


Yanbang Wang, Hejie Cui, Jon Kleinberg
Neural Information Processing Systems (NeurIPS) [preprint]

Accurate graph recall from text is essential for LLMs to perform reasoning tasks involving graph-structured information. While human graph recall has been studied extensively and shows structural biases aligned with social relationships, little is known about how LLMs handle similar tasks. This work presents the first systematic study of graph recall by LLMs, revealing that they often underperform and tend to favor microstructures such as triangles and alternating 2-paths. Additionally, more advanced LLMs recall graphs more accurately when the graph is narrated in a language style consistent with its original domain.


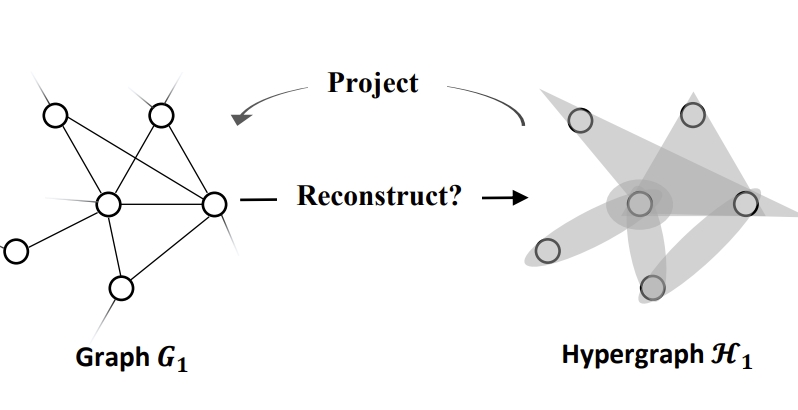

Yanbang Wang, Jon Kleinberg
International Conference on Learning Representations (ICLR) [preprint]

We study the implications of the modeling choice to use a graph, instead of a hypergraph, to represent real-world interconnected systems whose constituent relationships are inherently higher-order. Such a modeling choice typically involves an underlying projection process that maps the original hypergraph onto a graph, and it is common in graph-based analysis. While hypergraph projection can lead to a loss of higher-order relations, there have been very few studies on the consequences of doing so or on how to remedy them.
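To make the information loss concrete, here is a minimal sketch of one common projection, clique expansion, where every pair of nodes sharing a hyperedge becomes an edge. The abstract does not say which projection the paper studies, so clique expansion and the helper name `clique_projection` are illustrative assumptions. Two different hypergraphs collapse onto the same triangle:

```python
from itertools import combinations


def clique_projection(hyperedges):
    """Clique expansion: connect every pair of nodes that co-occur in at
    least one hyperedge. Edges are stored as sorted (u, v) tuples."""
    edges = set()
    for hyperedge in hyperedges:
        for u, v in combinations(sorted(set(hyperedge)), 2):
            edges.add((u, v))
    return edges


h_triple = [{1, 2, 3}]               # one 3-way relation
h_pairs = [{1, 2}, {2, 3}, {1, 3}]   # three separate 2-way relations

print(clique_projection(h_triple))                                 # {(1, 2), (1, 3), (2, 3)}
print(clique_projection(h_triple) == clique_projection(h_pairs))  # True
```

Once projected, nothing in the graph records whether the triangle came from one group interaction or three pairwise ones, which is the kind of ambiguity any remedy has to resolve.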


Yanbang Wang, Jon Kleinberg
Neural Information Processing Systems (NeurIPS) [preprint]

Link recommendations in online social networks help users discover connections and thereby enhance engagement. However, the added links can also escalate conflict and polarization, and there is limited understanding of the relationship between these two outcomes. This work provides one of the first rigorous analyses of this question, using the recently popular Friedkin-Johnsen model of opinion dynamics.
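For readers unfamiliar with the Friedkin-Johnsen (FJ) model, here is a minimal sketch, assuming an unweighted graph given as neighbor lists. Each person repeatedly sets their expressed opinion to the average of their fixed innate opinion and their neighbors' expressed opinions, which converges to the equilibrium (I + L)^{-1} s, where L is the graph Laplacian. Polarization (variance of expressed opinions) and disagreement (squared opinion differences across edges) are two commonly used measures; the paper's exact definitions and graph setup may differ, and all names and numbers below are hypothetical.

```python
def fj_equilibrium(neighbors, innate, iters=500):
    """Iterate the FJ update z_i <- (s_i + sum_{j in N(i)} z_j) / (1 + deg(i)).
    Each sweep uses the previous z (Jacobi style); it converges to (I + L)^{-1} s."""
    z = list(innate)
    for _ in range(iters):
        z = [(innate[i] + sum(z[j] for j in neighbors[i])) / (1 + len(neighbors[i]))
             for i in range(len(innate))]
    return z


def polarization(z):
    """Sum of squared deviations of expressed opinions from their mean."""
    mean = sum(z) / len(z)
    return sum((x - mean) ** 2 for x in z)


def disagreement(neighbors, z):
    """Sum of (z_i - z_j)^2 over undirected edges; each edge appears twice
    in the neighbor lists, hence the division by 2."""
    return sum((z[i] - z[j]) ** 2 for i in neighbors for j in neighbors[i]) / 2


innate = [1.0, 0.8, -0.8, -1.0]
before = {0: [1], 1: [0], 2: [3], 3: [2]}        # two disconnected like-minded pairs
after = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}   # plus one recommended link 1-2

for graph in (before, after):
    z = fj_equilibrium(graph, innate)
    print(round(polarization(z), 3), round(disagreement(graph, z), 3))
# 3.244 0.009
# 1.216 0.749
```

In this toy instance the single cross-group link lowers polarization while raising disagreement, illustrating how the two outcomes can move in different directions; it is not a result from the paper.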


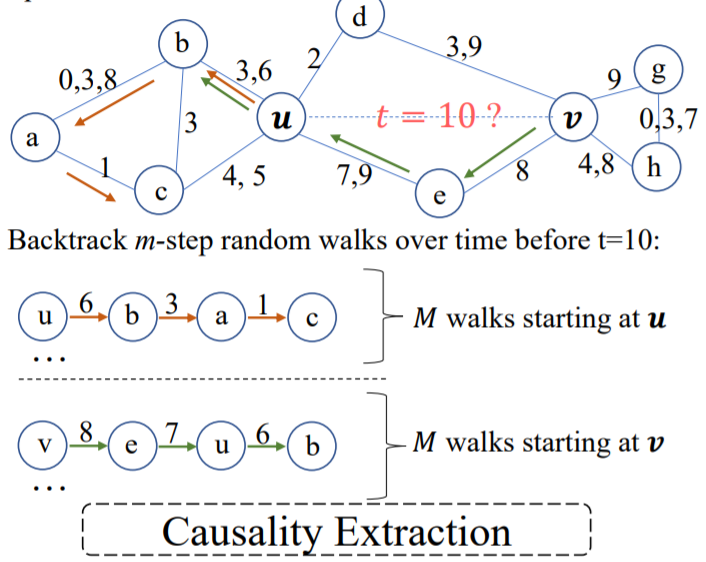

Yanbang Wang, Yen-Yu Chang, Yunyu Liu, Jure Leskovec, Pan Li
International Conference on Learning Representations (ICLR) [preprint] [project page] [code]

We propose Causal Anonymous Walks (CAWs) for inductive representation learning in temporal networks. CAWs are extracted by temporal random walks and serve as an automatic retrieval of temporal network motifs to represent network dynamics, avoiding the time-consuming selection and counting of those motifs. CAWs adopt a novel anonymization strategy that replaces node identities with the hitting counts of the nodes in a set of sampled walks; this keeps the method inductive and simultaneously establishes the correlation between motifs. CAWs achieve state-of-the-art (SOTA) performance on both transductive and inductive link prediction in temporal networks.
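The anonymization step can be sketched as follows, assuming the walks have already been sampled and are given as equal-length lists of node IDs. This simplified version omits temporal sampling and uses a single walk set; the function names are hypothetical and not taken from the released code. Each node is replaced by a vector counting how often it appears at each step across the walk set, so renaming the nodes leaves the anonymized walks unchanged:

```python
from collections import defaultdict


def hitting_counts(walks):
    """Map each node to a tuple whose k-th entry counts how many of the
    sampled walks visit that node at step k."""
    length = len(walks[0])
    counts = defaultdict(lambda: [0] * length)
    for walk in walks:
        for step, node in enumerate(walk):
            counts[node][step] += 1
    return {node: tuple(c) for node, c in counts.items()}


def anonymize(walks):
    """Replace every node identity in the walks by its hitting-count tuple."""
    table = hitting_counts(walks)
    return [[table[node] for node in walk] for walk in walks]


walks = [["a", "b", "c"], ["a", "b", "d"], ["a", "c", "b"]]
print(anonymize(walks)[0])  # [(3, 0, 0), (0, 2, 1), (0, 1, 1)]

# Renaming every node leaves the anonymized walks unchanged (inductiveness).
mapping = {"a": "u", "b": "v", "c": "w", "d": "x"}
renamed = [[mapping[n] for n in walk] for walk in walks]
print(anonymize(renamed) == anonymize(walks))  # True
```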


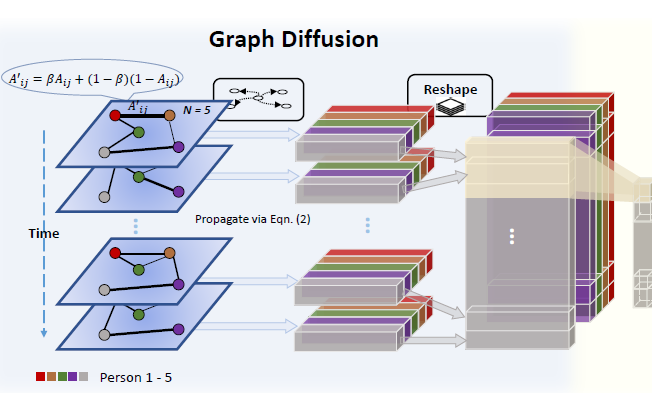

Yanbang Wang, Pan Li, Chongyang Bai, Jure Leskovec
The World Wide Web Conference (WWW) [preprint] [project page] [KDD'20 Workshop spotlight video]

We propose a novel framework, termed Temporal Network-diffusion Convolutional Networks (TEDIC), for generic representation learning on dynamic social interaction networks. TEDIC diffuses node attributes over a combination of the original network and its complement to capture long-hop interactive patterns embedded in the behaviors of people making or avoiding contact. It also leverages temporal convolutional networks with a hierarchical set-pooling operation to flexibly extract patterns from interactions of different lengths scattered over a long time span. TEDIC is evaluated on four social character prediction tasks: deception detection, dominance identification, nervousness detection, and community detection. It consistently outperforms previous SOTA methods and provides interesting social insights.


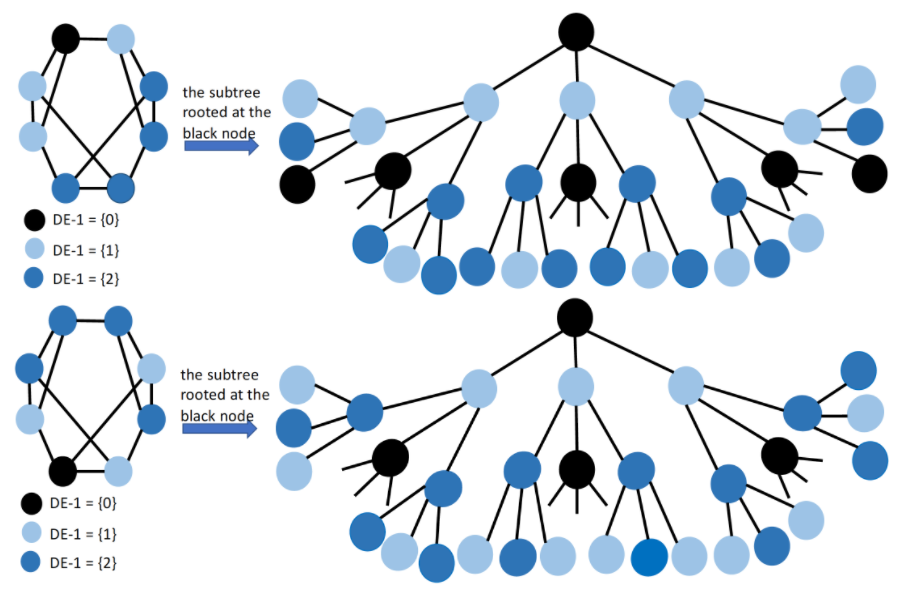

Pan Li, Yanbang Wang, Jure Leskovec
Neural Information Processing Systems (NeurIPS) [paper] [project page] [code]

We propose and mathematically analyze a general class of structure-related features, termed Distance Encoding (DE). DE assists GNNs in representing any set of nodes while providing strictly more expressive power than the 1-Weisfeiler-Lehman (1-WL) test. We also prove that DE can distinguish node sets embedded in almost all regular graphs, where traditional GNNs always fail.
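A minimal sketch of one DE instance, assuming shortest-path distance as the distance measure and a small unweighted graph stored as an adjacency dict; the function names and the cap `max_dist` are illustrative choices, not the released code. The example contrasts a 6-cycle with two disjoint triangles: every node has degree 2 in both, so 1-WL color refinement (and a plain message-passing GNN without extra features) cannot tell node 0 in one graph from node 0 in the other, while its distance features differ:

```python
from collections import deque


def bfs_distances(adj, source):
    """Shortest-path distances from source in an unweighted graph."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        u = queue.popleft()
        for v in adj[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist


def distance_encoding(adj, target_set, max_dist=3):
    """Give each node the tuple of its shortest-path distances to the target
    nodes; distances above max_dist and unreachable nodes map to max_dist + 1."""
    per_target = [bfs_distances(adj, t) for t in target_set]
    return {
        u: tuple(min(d.get(u, max_dist + 1), max_dist + 1) for d in per_target)
        for u in adj
    }


cycle6 = {i: [(i - 1) % 6, (i + 1) % 6] for i in range(6)}
two_triangles = {0: [1, 2], 1: [0, 2], 2: [0, 1], 3: [4, 5], 4: [3, 5], 5: [3, 4]}

print(sorted(distance_encoding(cycle6, [0]).values()))
# [(0,), (1,), (1,), (2,), (2,), (3,)]
print(sorted(distance_encoding(two_triangles, [0]).values()))
# [(0,), (1,), (1,), (4,), (4,), (4,)]
```

For a node set such as a candidate link, passing several targets (e.g. `[0, 3]`) yields one distance per target node.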


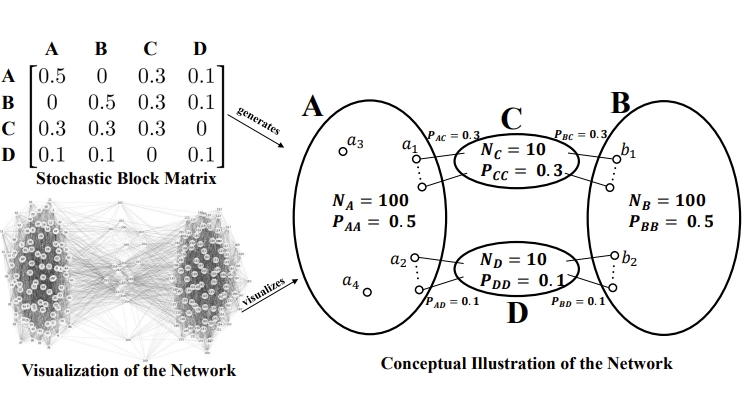

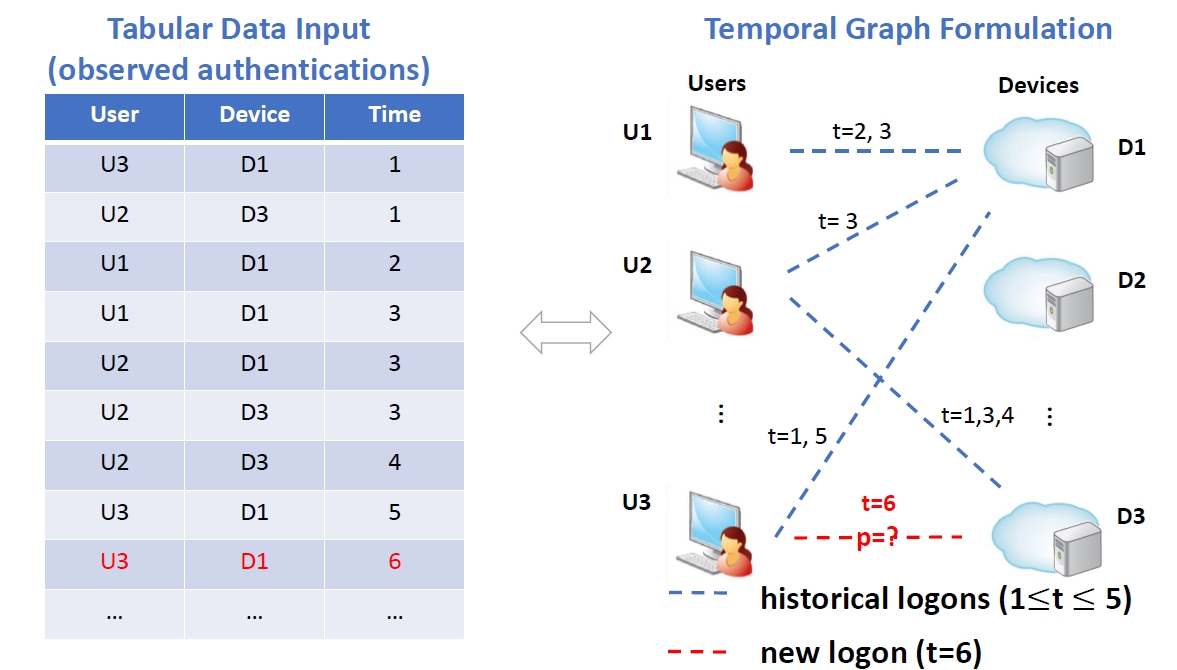

Yanbang Wang, Karl Hallgren, Jonathan Larson
The World Wide Web Conference (WWW) [to appear] (work done during an internship at Microsoft Research)

The high false positive (FP) rate of authentication alerts remains a prominent challenge in cybersecurity. We identify two problems that cause this issue and that existing learning-based anomaly detection methods leave unaddressed. We address these problems by proposing a new framework based on self-supervised link prediction on dynamic authentication networks. We validate our framework on 4 months of authentication data from 125 randomly selected real organizations that subscribe to Microsoft's defense services. Our model is intended to be integrated into Microsoft Security products.


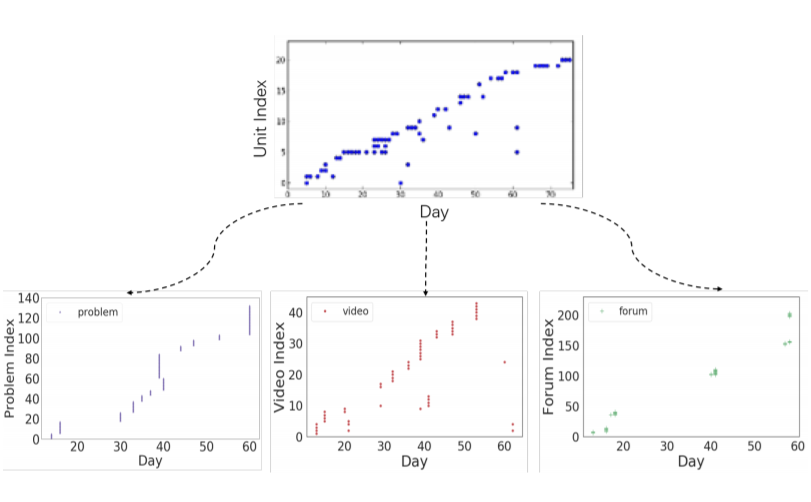

Yanbang Wang, Nancy Law, Erik Hemberg, Una-May O'Reilly
International Conference on Learning Analytics and Knowledge (LAK) [paper] [project page]

We present a new organization of MOOC learner activity data at a resolution between the fine granularity of the clickstream and coarse organizations that count activities, aggregate students, or use long time units. A detailed access trajectory (DAT) is a two-dimensional array of binary values: one axis is a time series, and the other is a chronologically ordered list of the instances of one MOOC component type (for example, videos in instructional order). Four empirical mini-studies suggest that DATs contain rich information about students' learning behaviors and facilitate MOOC learning analyses.
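As an illustration of the DAT layout, here is a minimal sketch assuming one learner's access log is available as (time unit, instance index) pairs, with instances indexed in course order. The choice of rows for instances and columns for time units, the weekly time unit, and the function name are assumptions for the example; repeated accesses within a time unit collapse to a single 1, which is what keeps the array binary:

```python
def detailed_access_trajectory(accesses, num_instances, num_time_units):
    """Build a binary DAT for one learner.

    accesses: iterable of (time_unit, instance_index) pairs.
    Row i, column t is 1 if the learner accessed instance i during time unit t.
    """
    dat = [[0] * num_time_units for _ in range(num_instances)]
    for t, i in accesses:
        dat[i][t] = 1
    return dat


# Hypothetical learner: 3 videos, 4 time units (e.g. weeks); video 2 is opened twice in week 3.
accesses = [(0, 0), (0, 1), (2, 1), (3, 2), (3, 2)]
for row in detailed_access_trajectory(accesses, num_instances=3, num_time_units=4):
    print(row)
# [1, 0, 0, 0]
# [1, 0, 1, 0]
# [0, 0, 0, 1]
```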


Organizing committee: LoG

PC member/reviewer: NeurIPS, ICLR, ICML, KDD, WebConf, AISTATS
