
¹Stanford University, ²Peking University

Watch each pair and choose which video was generated by AI. HumanScore targets the subtle biomechanical violations that often reveal the answer.


Recent advances in model architectures, compute, and data scale have driven rapid progress in video generation, producing increasingly realistic content. Yet, no prior method systematically measures how faithfully these systems render human bodies and motion dynamics.

We present HumanScore, a systematic framework to evaluate the quality of human motions in AI-generated videos. HumanScore defines six interpretable metrics spanning kinematic plausibility, temporal stability, and biomechanical consistency, enabling fine-grained diagnosis beyond visual realism alone.

Through carefully designed prompts, we elicit a diverse set of movements at varying intensities and evaluate videos generated by thirteen state-of-the-art models. Our analysis reveals consistent gaps between perceptual plausibility and the biomechanical fidelity of motion, identifies recurrent failure modes, and produces robust model rankings based on quantitative, physically meaningful criteria.

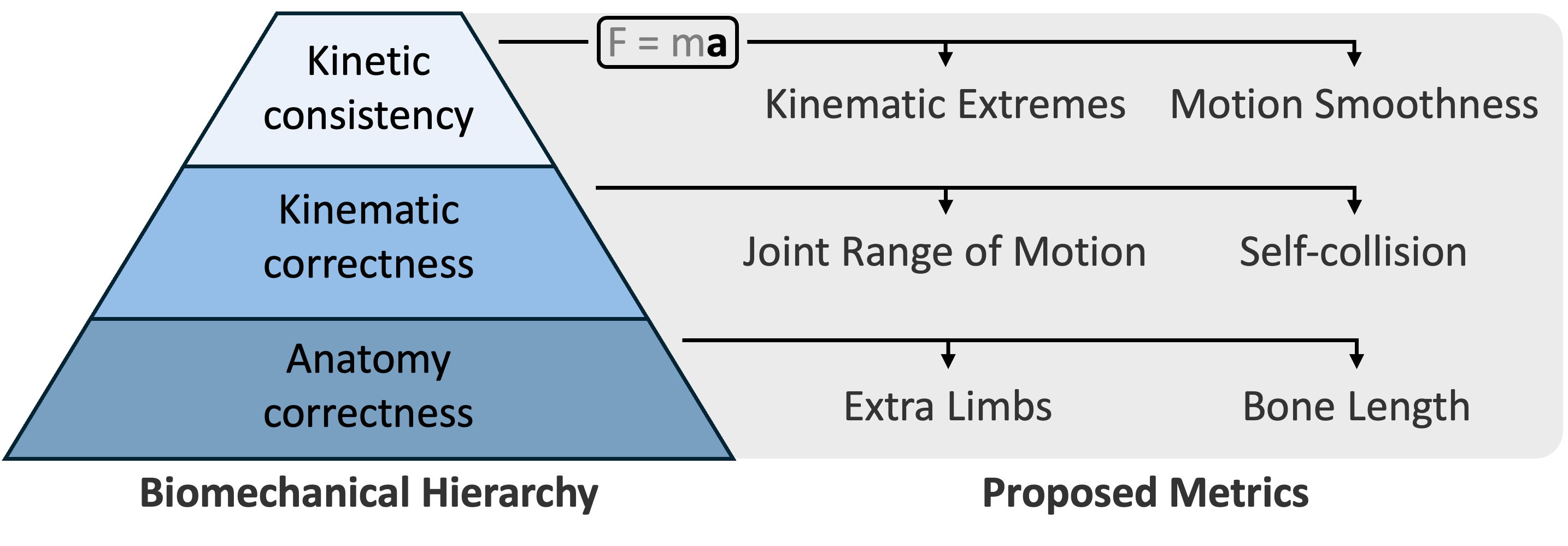

HumanScore follows a biomechanics hierarchy: anatomical correctness, kinematic correctness, and kinetic correctness. Each tier is evaluated with two independent metrics.

Metric I: Detects anatomically impossible duplicate arms, legs, hands, feet, or ghost-like body segments.

Metric II: Measures whether body segment lengths remain stable through time under a fitted skeleton.

Metric III: Penalizes poses that exceed physiologically plausible joint limits and hyperextension bounds.

Metric IV: Finds impossible interpenetration between non-adjacent body parts in reconstructed meshes.

Metric V: Flags unnatural velocity spikes in joint angular motion and body segment movement.

Metric VI: Measures excessive acceleration and jerk that appear as jitter, stutter, or temporal discontinuity.
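The skeleton-based checks described above (segment-length stability, velocity spikes, and acceleration or jerk) can be illustrated with a minimal sketch. It assumes per-frame 3D joint positions and joint angles are already available as plain Python values, uses a coefficient of variation for length stability, and uses forward finite differences for the temporal derivatives. The function names, the choice of statistics, and the sample data are illustrative assumptions, not HumanScore's actual formulas.

```python
import math
from statistics import mean, pstdev

Point = tuple[float, float, float]

def segment_length_cv(parent: list[Point], child: list[Point]) -> float:
    """Coefficient of variation of one segment's length across frames (0 = rigid)."""
    lengths = [math.dist(p, c) for p, c in zip(parent, child)]
    return pstdev(lengths) / mean(lengths)

def finite_difference(values: list[float], dt: float) -> list[float]:
    """Forward difference of a uniformly sampled signal."""
    return [(b - a) / dt for a, b in zip(values, values[1:])]

def peak_abs_derivatives(angles: list[float], fps: float) -> dict[str, float]:
    """Peak absolute velocity, acceleration, and jerk of one joint angle.

    Needs at least four samples so that the jerk series is non-empty.
    """
    dt = 1.0 / fps
    vel = finite_difference(angles, dt)
    acc = finite_difference(vel, dt)
    jerk = finite_difference(acc, dt)
    return {
        "velocity": max(map(abs, vel)),
        "acceleration": max(map(abs, acc)),
        "jerk": max(map(abs, jerk)),
    }

# Hypothetical data: a forearm that rotates rigidly, and an elbow angle
# (in degrees, sampled at 10 fps) with and without a single-frame glitch.
elbow = [(0.0, 0.0, 0.0)] * 3
wrist = [(0.3, 0.0, 0.0), (0.0, 0.3, 0.0), (0.0, 0.0, 0.3)]
print(segment_length_cv(elbow, wrist))

print(peak_abs_derivatives([0.0, 1.0, 2.0, 3.0, 4.0], fps=10.0))
print(peak_abs_derivatives([0.0, 1.0, 2.0, 30.0, 4.0], fps=10.0))
```

A single-frame glitch barely changes how the clip looks on average but dominates the peak jerk, which is why derivative-based checks are a natural fit for detecting jitter and temporal discontinuities.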

The final benchmark contains 51 motion types, balanced across three difficulty levels, with gentle and intense variants for each motion type.

* Higher scores indicate better biomechanical plausibility.

| Rank | Model | Availability | Anatomy | Kinematic | Kinetic | Overall |
|---|---|---|---|---|---|---|
| 1 | Seedance 1.0 Pro fast | Proprietary | 93.9 | 84.7 | 94.3 | 91.1 |
| 1 | HunyuanVideo 1.5 | Open source | 95.3 | 83.0 | 94.9 | 91.1 |
| 3 | KlingAI 2.5 Turbo Pro | Proprietary | 91.0 | 86.4 | 95.1 | 90.8 |
| 4 | Ray 3.0 | Proprietary | 86.7 | 82.5 | 93.8 | 87.7 |
| 5 |  | Open source | 86.7 | 80.7 | 92.1 | 86.5 |
| 6 | Sora-2 | Proprietary | 90.8 | 78.2 | 89.4 | 86.1 |
| 6 | Wan 2.2 | Open source | 94.0 | 78.8 | 85.6 | 86.1 |
| 8 | Veo 3.1 fast | Proprietary | 84.6 | 79.8 | 93.3 | 85.9 |
| 9 | Hailuo 02 | Proprietary | 89.1 | 77.1 | 91.2 | 85.8 |
| 10 | PixVerse 5.5 | Proprietary | 87.0 | 78.6 | 91.0 | 85.5 |
| 11 | Wan 2.6 | Proprietary | 89.6 | 78.0 | 86.8 | 84.8 |
| 12 | Pika v2.2 | Proprietary | 88.2 | 74.8 | 81.8 | 81.6 |
| 13 |  | Open source | 73.8 | 64.3 | 86.3 | 74.8 |
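As a reading aid for the table, the Overall column is close to the unweighted mean of the three tier scores (within about 0.15 points, presumably because the published tier scores are rounded), and tied Overall scores share a rank with the following rank skipped. The sketch below reproduces both conventions for the top three rows. The averaging rule is an inference from the published numbers, not something the page states, and the helper names are hypothetical.

```python
def competition_ranks(scores: list[float]) -> list[int]:
    """Standard competition ranking ("1, 1, 3"): ties share a rank, the next is skipped."""
    return [1 + sum(other > s for other in scores) for s in scores]

def tier_mean(anatomy: float, kinematic: float, kinetic: float) -> float:
    """Unweighted mean of the three tier scores (assumed aggregation rule)."""
    return (anatomy + kinematic + kinetic) / 3

# Top three rows of the table: (model, anatomy, kinematic, kinetic, reported overall).
rows = [
    ("Seedance 1.0 Pro fast", 93.9, 84.7, 94.3, 91.1),
    ("HunyuanVideo 1.5", 95.3, 83.0, 94.9, 91.1),
    ("KlingAI 2.5 Turbo Pro", 91.0, 86.4, 95.1, 90.8),
]

ranks = competition_ranks([row[4] for row in rows])
for (name, anatomy, kinematic, kinetic, overall), rank in zip(rows, ranks):
    recomputed = round(tier_mean(anatomy, kinematic, kinetic), 2)
    print(rank, name, recomputed, overall)
```

Ranking on the reported Overall value rather than the recomputed mean keeps the tie between the first two rows exactly as published.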

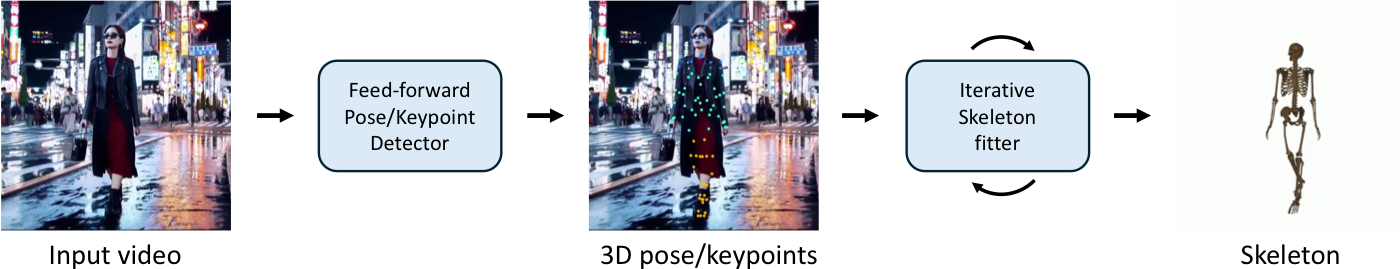

Metrics II, III, V, and VI depend on a biomechanics-aware skeleton fitting pipeline. We first infer 87 anatomical 3D keypoints from monocular observations, then optimize a physics-based human skeletal model to match those keypoints and export an OpenSim-compatible trajectory for downstream analysis.
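To make the idea of optimizing a skeleton to match keypoints concrete, here is a toy sketch that fits a planar two-segment chain with fixed segment lengths to two observed keypoints by gradient descent on the squared keypoint error. Everything in it (the 2D chain, the names, the optimizer settings) is an illustrative assumption. The actual pipeline fits a full physics-based skeletal model to 87 3D keypoints and is not reproduced here.

```python
import math

def forward(theta1: float, theta2: float, l1: float, l2: float):
    """Positions of the two distal joints of a planar chain rooted at the origin."""
    elbow = (l1 * math.cos(theta1), l1 * math.sin(theta1))
    wrist = (elbow[0] + l2 * math.cos(theta1 + theta2),
             elbow[1] + l2 * math.sin(theta1 + theta2))
    return elbow, wrist

def keypoint_error(angles, targets, l1, l2) -> float:
    """Sum of squared distances between model joints and observed keypoints."""
    joints = forward(angles[0], angles[1], l1, l2)
    return sum(math.dist(j, t) ** 2 for j, t in zip(joints, targets))

def fit_chain(targets, l1=1.0, l2=1.0, lr=0.05, steps=2000, h=1e-6):
    """Gradient descent with central-difference gradients, starting from a straight pose."""
    angles = [0.0, 0.0]
    for _ in range(steps):
        grad = []
        for i in range(2):
            plus, minus = angles.copy(), angles.copy()
            plus[i] += h
            minus[i] -= h
            grad.append((keypoint_error(plus, targets, l1, l2)
                         - keypoint_error(minus, targets, l1, l2)) / (2 * h))
        angles = [a - lr * g for a, g in zip(angles, grad)]
    return angles

# Synthetic "observed" keypoints generated from known joint angles (radians).
observed = forward(0.6, 0.4, 1.0, 1.0)
fitted = fit_chain(observed)
print(fitted, keypoint_error(fitted, observed, 1.0, 1.0))
```

Because the segment lengths are fixed parameters of the model rather than free variables, the fit can only explain the keypoints through joint angles, which is the property that makes downstream joint-limit and derivative checks meaningful.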

Each row shows one good case and one bad case for the same model. This makes it easier to see where the fitting pipeline remains stable and where unrealistic motion starts to break down.

@misc{fang2026humanscorebenchmarkinghumanmotions,
  title={HumanScore: Benchmarking Human Motions in Generated Videos},
  author={Yusu Fang and Tiange Xiang and Tian Tan and Narayan Schuetz and Scott Delp and Li Fei-Fei and Ehsan Adeli},
  year={2026},
  eprint={2604.20157},
  archivePrefix={arXiv},
  primaryClass={cs.CV},
  url={https://arxiv.org/abs/2604.20157},
}