Abstract

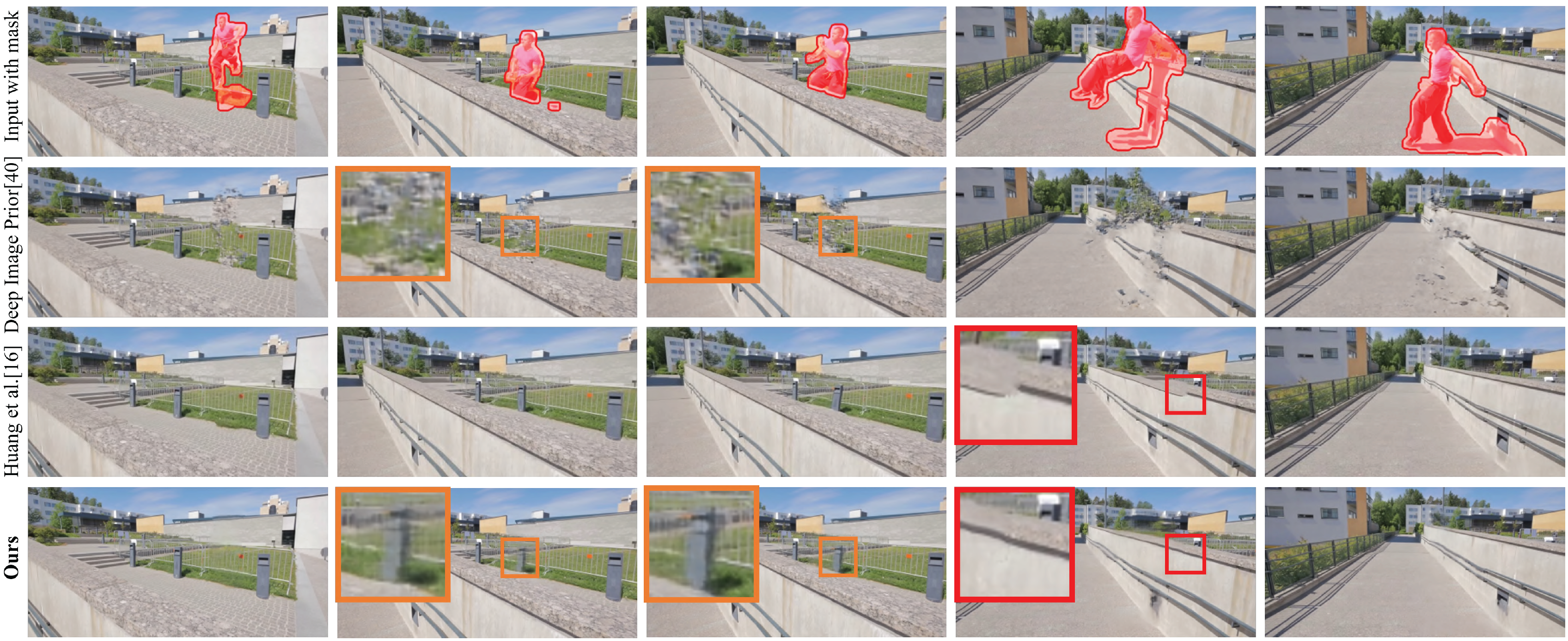

We propose a novel video inpainting algorithm that simultaneously hallucinates missing appearance and motion (optical flow) information, building upon the recent "Deep Image Prior" (DIP) that exploits convolutional network architectures to enforce plausible texture in static images. In extending DIP to video we make two important contributions. First, we show that coherent video inpainting is possible without a priori training. We take a generative approach to inpainting based on internal (within-video) learning without reliance upon an external corpus of visual data to train a one-size-fits-all model for the large space of general videos. Second, we show that such a framework can jointly generate both appearance and flow, whilst exploiting these complementary modalities to ensure mutual consistency. We show that leveraging appearance statistics specific to each video achieves visually plausible results whilst handling the challenging problem of long-term consistency.
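To make the two ideas in the abstract concrete, the sketch below shows, in NumPy, one plausible form of (a) a reconstruction loss evaluated only on known pixels, as in DIP-style internal learning, and (b) a flow-based consistency term that compares a frame with its flow-warped neighbour. This is an illustrative assumption, not the authors' implementation: the function names, the nearest-neighbour warp, the grayscale `(H, W)` frame layout, and the `(dx, dy)` flow convention are all hypothetical choices made for brevity.

```python
import numpy as np


def masked_reconstruction_loss(pred, target, known_mask):
    """Mean squared error over known pixels only (known_mask == 1).

    pred, target, known_mask: float arrays of shape (H, W).
    Pixels inside the hole (known_mask == 0) do not contribute.
    """
    diff = (pred - target) * known_mask
    return float((diff ** 2).sum() / max(known_mask.sum(), 1.0))


def warp_nearest(frame, flow):
    """Backward-warp `frame` with a dense flow field (hypothetical convention).

    flow[y, x] = (dx, dy) gives the offset from pixel (x, y) in the current
    frame to its source location in `frame`. Nearest-neighbour lookup is used
    here for simplicity; out-of-range coordinates are clamped to the border.
    """
    h, w = frame.shape[:2]
    ys, xs = np.mgrid[0:h, 0:w]
    src_x = np.clip(np.rint(xs + flow[..., 0]).astype(int), 0, w - 1)
    src_y = np.clip(np.rint(ys + flow[..., 1]).astype(int), 0, h - 1)
    return frame[src_y, src_x]


def flow_consistency_loss(pred_t, pred_next, flow_t_to_next):
    """Mean squared difference between frame t and the warped frame t+1."""
    warped = warp_nearest(pred_next, flow_t_to_next)
    return float(((pred_t - warped) ** 2).mean())


# Toy usage: a frame with a 2x2 hole and a neighbour shifted right by one pixel.
frame = np.arange(16, dtype=float).reshape(4, 4)
known = np.ones((4, 4))
known[1:3, 1:3] = 0.0                       # hole region, ignored by the loss
guess = frame.copy()
guess[1:3, 1:3] = -1.0                      # arbitrary content inside the hole
hole_free_loss = masked_reconstruction_loss(guess, frame, known)

next_frame = np.roll(frame, 1, axis=1)      # content moves one pixel right
flow = np.zeros((4, 4, 2))
flow[..., 0] = 1.0                          # dx = +1 everywhere
warped_back = warp_nearest(next_frame, flow)
```

In this toy setup the masked loss is zero because the guess differs from the target only inside the hole, and the warped neighbour matches the original frame everywhere except the last column, where the clamped lookup has no valid source pixel.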

Supplemental Video

Oral Video (ECCV 2020 workshop)

Citation

@inproceedings{zhang2019internal,
  title={An Internal Learning Approach to Video Inpainting},
  author={Zhang, Haotian and Mai, Long and Xu, Ning and Wang, Zhaowen and Collomosse, John and Jin, Hailin},
  booktitle={Proceedings of the IEEE International Conference on Computer Vision},
  pages={2720--2729},
  year={2019}
}

Contact

For any questions regarding this research paper, please contact Haotian Zhang at haotianz@cs.stanford.edu.

Template adapted from GLAMR.